NVIDIA was the first of the large-scale technology providers to see the opportunity in artificial intelligence (AI), particularly as applied to autonomous machines. This early focus allowed the company to build up a set of skills, tools, and purpose-built hardware that substantially enhanced the AI efforts of its customers, including IBM, another AI pioneer. As others entered the market, the need for benchmarks recently drove the introduction of MLPerf, a set of machine learning benchmarks that can be used to compare the performance of this technology across the growing number of vendors participating in this segment.

As you would expect, these benchmarks show NVIDIA to be massively dominant across every measured segment and, for now, the only vendor even willing to submit results for several of the newer benchmarks that matter most to the growth of this still-nascent industry.

MLPerf

One reason a benchmark like this is critical is that, with AI, both training and inference are hard. There is also a growing diversity of tools, approaches, and hardware, coupled with the increasing complexity of the neural networks that underpin these solutions. The solutions themselves require extensive pre- and post-processing, and they have to fit into a diverse ecosystem of autonomous machines, smart devices, and cloud and data center offerings.

Although it is still in pre-release form, as its 0.5 version number indicates, this is a complete benchmark set. There are two image classification benchmarks, based on MobileNet-v1 and ResNet-50 v1.5, both production-level models. There are two object detection benchmarks: a low-resolution one based on Single-Shot Detector (SSD) with MobileNet-v1 and a high-resolution one based on SSD with ResNet-34. Finally, there is one machine translation benchmark, based on GNMT.

The tests run in both an Offline scenario and a Server scenario. These approaches reflect what a stand-alone AI can do and what a cloud-like AI service might provide. They also run in Single-Stream and Multi-Stream scenarios to show what a focused system, such as a security camera, could do and what a complex general-purpose system, such as an autonomous car, could do. Results are reported for both commercially available and preview SoCs (systems on a chip).

NVIDIA Results

NVIDIA was impressive both in performance, where it significantly outperformed every competitor with a commercially available solution, and in breadth of coverage, where it participated in all of the testing categories. Google's interesting TPUv3 had good breadth but delivered surprisingly poor performance, while Habana's Goya production solution did impressively well in a couple of categories but lacked breadth, and NVIDIA still outperformed it overall.

The preview category showcased impressive performance from some NVIDIA competitors, but running prototype code against production code isn't a fair comparison, and those submissions were generally limited to the easiest tests, image classification. These results point to only a limited potential risk to NVIDIA, whose tools appear much broader and are already available, compared with the limited pre-production showing from competitors. Firms typically don't share results from pre-production products once they have production-ready products in market, because those results can cause customers to defer purchases until the better product is available. This is likely why so few vendors showcased preview products.

Wrapping Up

AI is a force multiplier, which means performance advantages aren't linear; they can compound. Because of this multiplier effect, even small performance advantages could yield large gains in the overall performance of the intelligent system that depends on the AI. In applications such as autonomous vehicles, this kind of advantage could, and I expect will, make the difference between viable and non-viable solutions.

The market badly needed a benchmark to test whether our belief that NVIDIA had a massive lead was consistent with the facts, and this time the results confirmed, with high confidence, that NVIDIA is massively dominant in this space, at least against known competitors. This segment is hot, and other companies will certainly enter it over time.

The results also revealed some minor risks from competitors' preview products, but those products lack the deep toolsets and in-market experience of NVIDIA's offerings, and you need a complete solution to compete in this segment.

To add insult to injury, NVIDIA also released its new Jetson Xavier NX offering. This SoC is a nano-sized AI supercomputer delivering up to 21 TOPS of AI performance in a 10- to 15-watt power envelope, which could revolutionize small autonomous drones and vehicles. NVIDIA isn't going to make the proverbial "tortoise and hare" mistake; rather than resting on its laurels, it is accelerating into the future. As a result, NVIDIA is going to be remarkably hard to catch, let alone pass, in this segment.

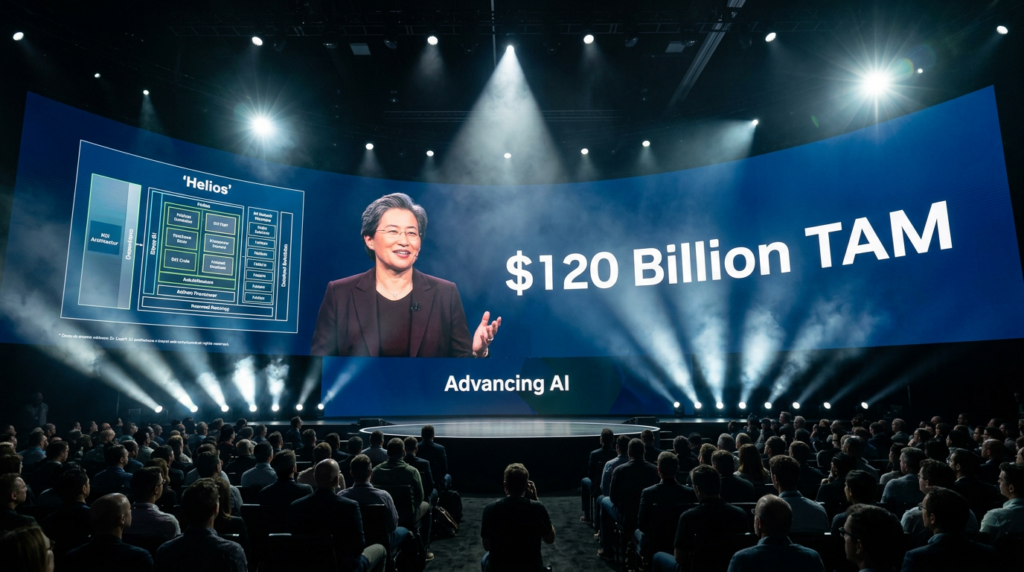
